The Moat in Vertical AI Is Buried in the Dirty Work Nobody Wants to Do | SVTR Thesis

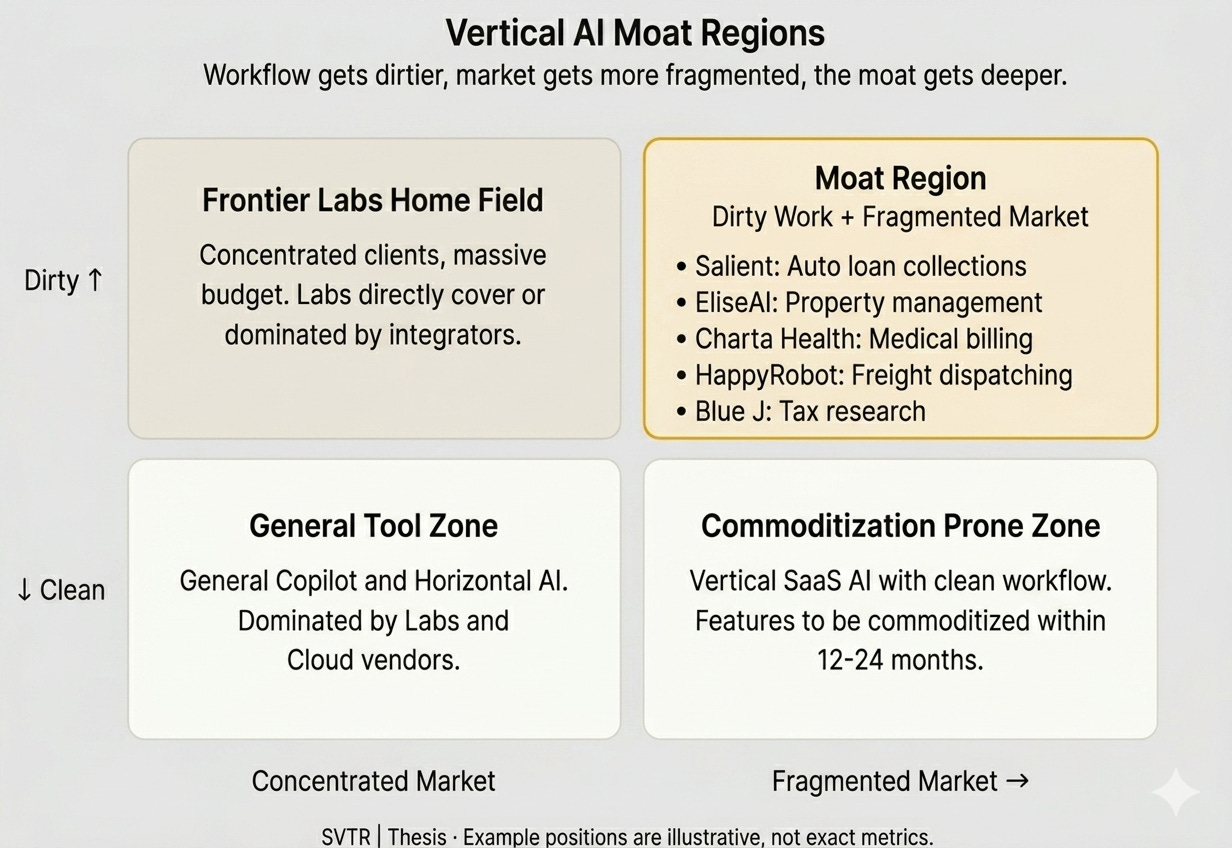

The next $10-billion Vertical AI company won’t be born in the sexiest category. It will emerge from the kind of market a VC’s instinct tells them to skip on first glance: industries where workflows are messy, buyers have minimal technical sophistication, and customers are so fragmented that no one wants to knock on doors one by one. These markets look too small, too chaotic, too unlike a venture-scale opportunity. But it is precisely this quality of “not looking like one” that will form the most impregnable moat of the next decade.

The underlying logic is this: as model capabilities commoditize rapidly, the defensibility of the AI application layer is migrating from “intelligence” to “operational depth.” A clean, well-defined, easily standardized workflow means any player with access to the same model can replicate what you do within six months. A workflow stuffed with exceptions, compliance requirements, manual approvals, and legacy system integrations demands that a company spend years rebuilding its organization, data pipelines, and processes from scratch. That rebuilding, in itself, is the new moat.

I. Clean Workflows Have No Future

Over the past two years, Vertical AI has been one of the highest-consensus themes in Silicon Valley venture circles. But companies flying the same “Vertical AI” banner are splitting into two paths with entirely different destinies.

The first type sells a vertical AI product: injecting intelligence into a specific task, such as faster contract review, more accurate medical summaries, or smarter customer service replies. These products demo beautifully, deploy quickly, and can reach $5–10M ARR faster than any prior generation of SaaS. But their problem is structural. When a task is clean enough, the risk low enough, and the integration with existing systems loose enough, three things follow: a model upgrade lets competitors replicate you, a slightly technical customer builds it in-house, and a frontier lab that decides to enter simply overwrites you. The purer the task, the faster the replacement.

The second type is doing something fundamentally different. They are not selling “task intelligence.” They are taking over the entire workflow end-to-end, from data ingestion and system integration through approval processes, exception handling, and compliance frameworks, all the way to capturing the full budget a customer used to spend on human labor. On the surface, these companies appear to be building “AI for X.” In substance, they are rebuilding the operational chassis of an entire vertical industry. The first type will be compressed into a feature within two to three years. The second will become the infrastructure of its industry within ten.

What determines the fate of a Vertical AI company is not how smart its model is, but how deep into the dirty work it is willing to reach.

II. The Dirty Work Is the Moat

Using AI to handle auto loan collections doesn’t sound like a sexy thesis. But Salient is a company worth examining closely. It deploys AI voice agents to call delinquent auto loan borrowers, a scenario locked down by three overlapping regulatory frameworks: the FDCPA, the TCPA, and Reg F. A single violation can trigger a regulatory investigation. During each call, the AI must apply overlapping federal and state regulations across jurisdictions in real time, negotiate payment arrangements, comply with contact frequency limits, and know exactly when to hand the call back to a human agent. This is not “a task.” It is a tangled mess of operational, compliance, and technical problems all fused together.

But it is precisely this mess that lets Salient run a business where a human call costs over ten dollars and an AI call costs a fraction of that — and no one can easily take it away. Frontier labs won’t crouch down to chew through compliance and exception handling. Customer companies lack the engineering capacity to build production-grade AI in-house. Competitors, even with access to the same model, cannot bypass the thousands of edge-case workflows Salient has already accumulated.

The same logic keeps surfacing in corners that traditional VCs disdain:

Medical pre-bill auditing: Charta Health navigates the labyrinthine relationships between payer rules, CPT codes, and denial patterns.

Freight logistics dispatching: HappyRobot, Pallet, and Augment are replacing the endless phone calls and email coordination among carriers, shippers, and warehouses.

Tax research: Blue J has entered a $145 billion market populated by 46,000 accounting firms, the vast majority with fewer than ten employees.

These markets are overlooked for two reasons. First, the workflows are dirty. Dirty means resistant to abstraction, resistant to standardization, resistant to the top-down imposition of “general AI capabilities.” Second, the market structure is fragmented. Buyers are scattered across thousands of small-to-midsize operators who generally lack internal engineering teams, lack architectural judgment, and lack the instinct that “technology should be built, not bought.”

These two characteristics are two sides of the same coin: the very thing that makes a market overlooked is also what makes it defensible.

III. TAM Isn’t Underestimated — It’s Measured with the Wrong Ruler

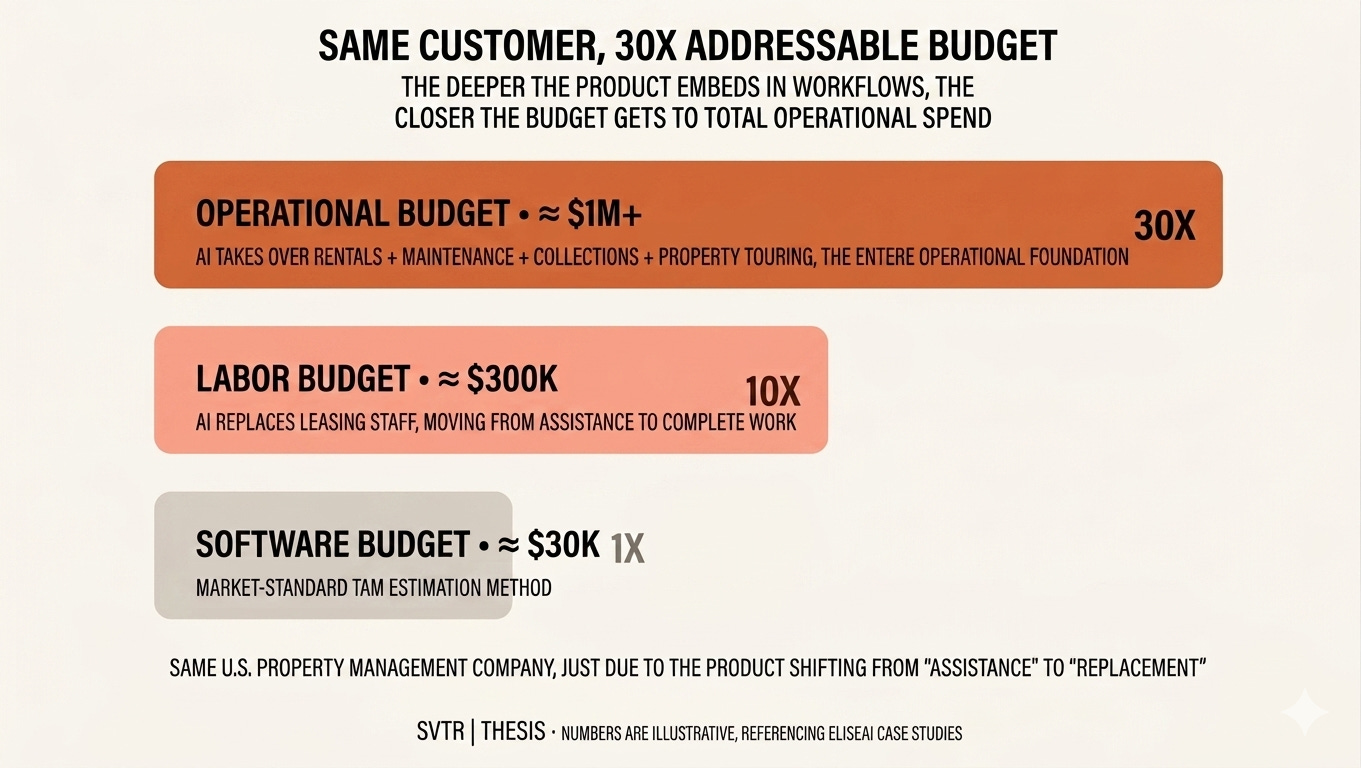

The deeper issue is that these markets aren’t actually small. They’ve just been measured with the wrong yardstick.

The mainstream VC approach to TAM estimation looks at how much an industry spends on software. Apply that ruler to “AI for property leasing,” “AI for medical billing,” or “AI for trucking dispatch,” and the numbers don’t look venture-scale. But this is a fundamentally misaligned ruler — in these markets, the money isn’t in the software budget. It’s in the labor budget, the outsourced services budget, the operational costs distributed across countless small positions.

A property management company might spend $30,000 a year on leasing software, but $300,000 on leasing staff salaries. When an AI product evolves from “assisting leasing agents” to “replacing leasing agents,” its sales target shifts from that $30,000 budget line to the $300,000 one. Push further: when the product expands to maintenance coordination, rent collection, and AI-guided tours, it reaches the entire property operations budget, a number north of $1 million. Same customer, same company; merely by deepening the product, the addressable budget expands more than thirtyfold.
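The arithmetic behind that expansion can be sketched in a few lines. This is only an illustration using the three figures quoted above; the names `BudgetLine`, `STAGES`, and `expansion_multiples` are hypothetical, and the $1,000,000 figure is treated as a floor because the text says only “north of $1 million.”

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BudgetLine:
    """One budget a vendor can sell against at a single customer."""
    name: str
    annual_usd: int


# Figures taken from the property-management example in the text.
# The last value is a lower bound ("north of $1 million"), not a measurement.
STAGES = [
    BudgetLine("leasing software", 30_000),
    BudgetLine("leasing staff salaries", 300_000),
    BudgetLine("full property operations", 1_000_000),
]


def expansion_multiples(stages):
    """Return each budget line as a multiple of the first (software) line."""
    base = stages[0].annual_usd
    return {stage.name: stage.annual_usd / base for stage in stages}


if __name__ == "__main__":
    for name, multiple in expansion_multiples(STAGES).items():
        print(f"{name}: {multiple:.1f}x the software budget")
```

Read this way, the jump from software to labor is a tenfold step, and the jump to the full operations budget is at least a further threefold step on top of it.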

EliseAI is the textbook case. It started as a leasing automation product with a $50,000 ACV — looking every bit like a ceiling-locked proptech play. But as it evolved from “assisting leasing” to “replacing leasing,” then extended into maintenance, rent collection, and AI-powered tours, it now serves one-eighth of all apartment units in America, with per-customer annual spend reaching the million-dollar range. The company itself has begun replicating the same playbook into healthcare’s $600 billion administrative cost pool. The market didn’t get bigger. The product revealed how big the market always was.

This mechanism also explains a critical paradox: why these markets can accommodate $10-billion companies yet won’t be directly targeted by OpenAI or Anthropic. Viewed through the software-spend lens, the market is too small for a frontier lab to justify mobilizing massive resources. Viewed through the labor-spend lens, the market is already large enough — but by the time it surfaces in public data as an “obvious opportunity,” the vertical player who has been grinding for five years will have already stacked up the data, integrations, and distribution.

The profitability model and organizational structure of frontier labs are simply not built for this work. They must continuously invest in the model frontier, must maximize token revenue (which directly conflicts with customers’ interest in moving toward agentic workflows), and cannot possibly build custom-grade applications for dozens of verticals in parallel. In most vertical markets, the AI application layer won’t be consumed top-down by labs. It will be won by vertical players willing to dig deep and trade focus for advantage.

IV. Implications for AI Investing

This thesis carries unmistakable implications for founders and investors navigating the AI wave.

For founders, the most dangerous temptation is to build something that “looks like AI”: a polished demo, a clean SaaS interface, a tidy workflow automation. These products will reach $5M ARR the fastest and get compressed into a feature the fastest. What’s truly worth building lies in directions that seem, at first glance, absurdly labor-intensive, hopelessly fragmented, and populated by buyers so unsophisticated you wonder if they’ll even sign a contract. It demands a temperament entirely different from the past decade of SaaS entrepreneurship: a willingness to make part of the company an “operations company” rather than purely a “software company,” a willingness to shoulder the dirty work of compliance, exceptions, and service delivery, and a willingness to accept lower margins than pure software in the early days in exchange for deeper customer embeddedness.

For investors, the screening mechanism needs an upgrade. The center of gravity in AI investing is shifting from “can the model do this?” to “is the workflow ugly enough?” A Vertical AI company worth backing should have its TAM estimated not by “software spending in the market” but by “the total labor and service costs the industry spends on this function.” A founder worth backing should demonstrate not merely the boundaries of model capability but the depth of their understanding of the industry’s dirty work: which exceptions account for 80% of the workload, which compliance requirements have been the Waterloo of every software company for the past decade, and which integrations truly prevent competitors from replicating the product.

It’s worth noting that this playbook carries an underappreciated divergence between the U.S. and China. The reason “replacing labor” drives such dramatic TAM expansion in the American market is that the multiplier between software budgets and labor budgets is enormous: per-customer labor spend is often ten times the software spend or more. China’s domestic labor cost structure compresses that multiplier, making per-customer expansion potential naturally narrower. On the other hand, Chinese teams are naturally comfortable with “doing operations, doing delivery, doing service,” which is precisely the part Silicon Valley founders are least willing to touch. We’re already seeing, within SVTR’s network, a cohort of Chinese-background teams bringing this operational resilience into America’s fragmented vertical markets. They may not have the strongest models, but they’re willing to go two steps further on the dirty work than their Silicon Valley competitors. In the Vertical AI generation, that willingness itself is the scarcest asset.

This is also the direction we continue to track: which industries have the most complete triangle of “heavy labor + high fragmentation + low tech DNA,” which teams truly understand the difference between “selling software” and “selling a system,” and which opportunities offer structural arbitrage between the U.S. and Chinese markets. The next batch of breakout companies will look, on the surface, less “AI” than many Silicon Valley darlings — but they will quietly take over the operational chassis of an entire industry.

Models win the demo. Wedges win the pilot. Systems win the market. The real bet in Vertical AI was never on the smartest player. It has always been on the one most willing to do the dirty work.